Ensuring Fair, Consistent & Bias-Free Evaluations

How HR leaders can design performance appraisals that eliminate bias, ensure consistency across managers, and build trust in the review process.

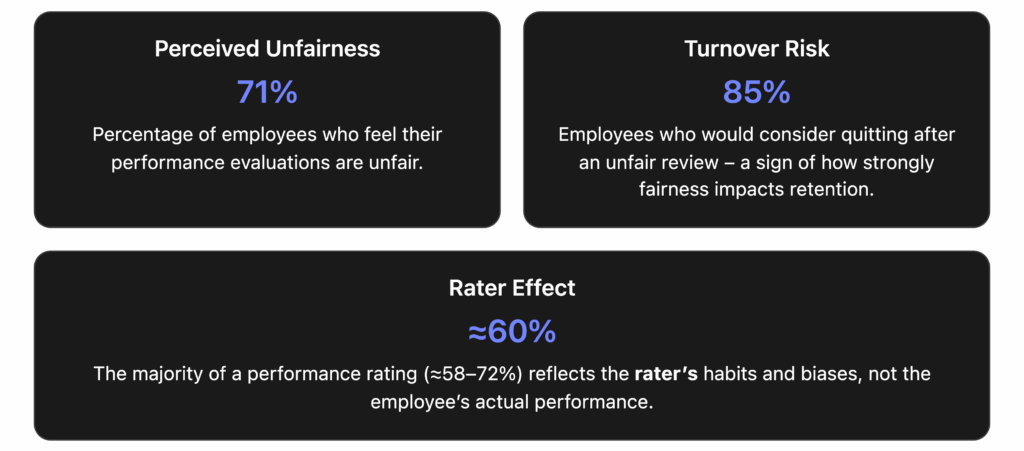

Fairness in performance reviews is a critical concern for organizations today. If appraisals are perceived as biased or inconsistent, the damage is real: top talent becomes disengaged, trust in leadership erodes, and retention suffers. Unfortunately, research shows fairness is exactly where many performance management systems fall short. One survey of Fortune 1000 companies found that 71% of employees felt their performance evaluations were unfair [1]. In another poll, 85% of workers said they would consider quitting if they received a performance review they felt was unfair [2]. And it’s not just employees – 90% of HR leaders admit annual reviews fail to accurately reflect employee contributions [3], and 95% of managers are unhappy with their performance review processes [4]. These numbers paint a clear picture: traditional reviews are too often seen as biased, subjective, or inconsistent, undermining their credibility.

Why do today’s performance appraisals so often miss the mark on fairness? Common pain points include inconsistent standards between managers and a host of cognitive biases (like recency bias, where recent events skew the review). In fact, studies find that idiosyncratic rater effects – differences in how individual managers evaluate – account for far more variance in ratings than the employee’s performance itself [5]. In one study of 5,000 managers, only 21% of the variation in ratings was explained by the employee’s actual performance; 62% was explained by who the manager was [6]. In other words, the same employee could be rated very differently by two different managers, which is the definition of unfairness.

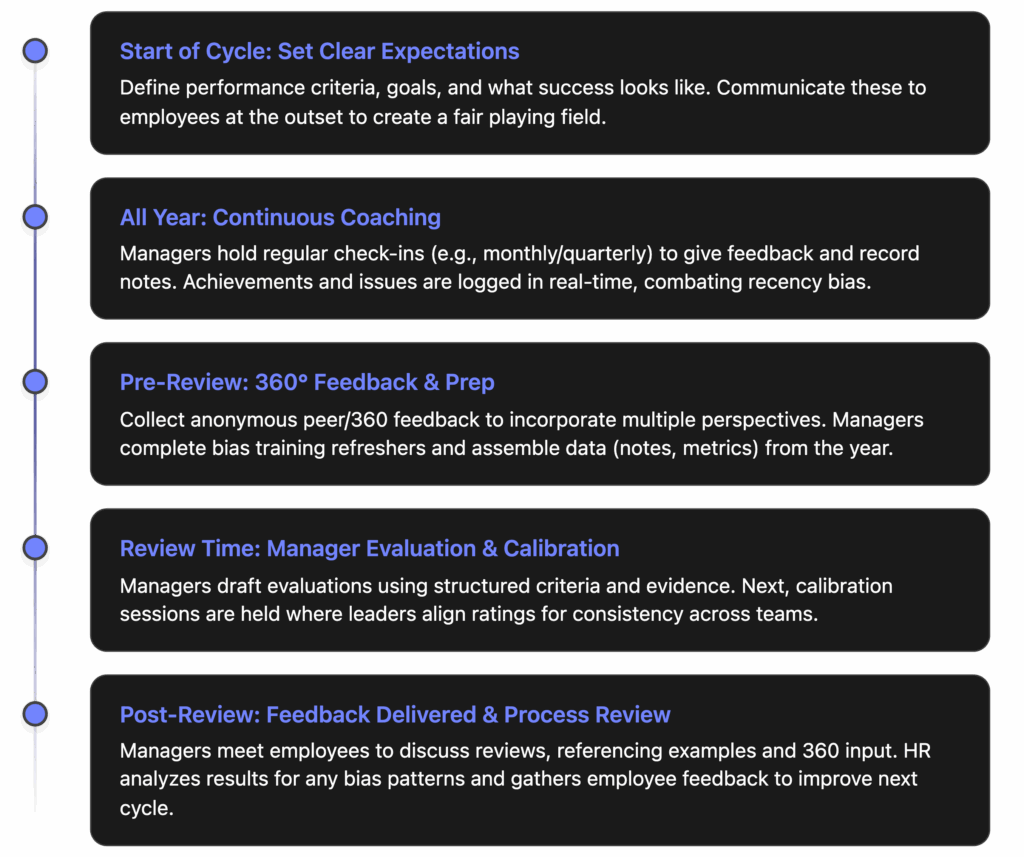

Below we’ll explore why these biases and inconsistencies arise – and, crucially, how to fix them. We’ll discuss strategies to design and use performance appraisal tools that mitigate bias, including: structured criteria to reduce subjectivity, calibration sessions to align standards, anonymous 360° feedback to broaden perspectives, and year-round data tracking to avoid recency bias. We’ll also highlight the importance of training managers to recognize their biases and use objective data when evaluating. Throughout, we provide practical tips and cite industry best practices (from academic research and HR thought leaders) to help you ensure your reviews are merit-based and transparent. By implementing these approaches – and leveraging tools like SuiteVal to support them – you can build a performance review process that employees trust and find fair.

The Bias Problem: Why Traditional Reviews Feel Unfair

Before diving into solutions, let’s unpack the common biases and inconsistencies that plague performance reviews. Recognizing these failure points is the first step to fixing them:

- Recency Bias: A tendency to overweight recent events (the last few weeks or months) in a review period, while forgetting or glossing over earlier performance [7]. For example, if an employee had a stellar year but made a visible mistake in the final week, a recency-biased manager might give an unduly low rating, effectively ignoring months of good work. Recency bias is often cited as the most common review bias in organizations [8].

- Halo/Horns Effect: The halo effect is when one positive quality or achievement outshines everything else, leading a manager to give inflated ratings across the board [9]. For instance, an employee who excelled in one big project might get top marks in unrelated areas just because of that “halo.” The horns effect is the opposite – one weakness or mistake drags down an otherwise decent evaluation [10]. These effects happen when managers don’t evaluate each aspect of performance independently.

- Leniency/Severity Bias: Some managers are “easy graders” who consistently rate everyone high (leniency bias), while others are stingy with high scores (severity bias) [11]. These personal tendencies skew ratings. If one department’s manager is lenient and another’s is harsh, an average performer could be rated “exceeds expectations” in one team but “needs improvement” in the other – an obvious unfairness if left unchecked.

- Central Tendency Bias: The reluctance to give very high or very low ratings, clustering everyone in the middle (“meets expectations”). A manager might avoid difficult conversations by marking even strong or weak performers as average. This muddies the distinctions in performance and can demotivate high achievers who don’t see their extra effort recognized.

- Similarity (Affinity) Bias: Managers may favor people who are similar to themselves or whom they personally get along with, consciously or unconsciously [12]. Shared background, interests, or personality can lead to higher ratings (an “affinity bias”), whereas those with different styles might be undervalued. This bias can particularly disadvantage employees from different demographic groups, undermining diversity and inclusion efforts [13].

- Spillover (Past Performance) Bias: Essentially the inverse of recency bias – here a manager’s past perception of the employee (positive or negative) spills over into the present, even if the current period tells a different story [14]. For example, an employee who struggled last year might still be rated poorly this year despite significant improvement, because the manager’s mental model hasn’t caught up (or vice versa).

- Attribution Bias: Attributing performance outcomes to personality/character rather than situational factors – e.g. assuming an employee missed a deadline because of laziness (character) rather than, say, because they were waiting on input from someone else (situation). This can lead to unfair judgments especially if different standards are applied to different people.

These biases are often unintentional – they’re human nature. But they have serious consequences. Biases can result in mis-rating employees, giving them feedback that is not truly reflective of their work. Over time, this erodes morale and trust: employees who sense bias or inconsistency in evaluations report significantly lower job satisfaction and are more likely to leave [15]. It also hurts organizational performance, as high performers may be overlooked and development needs misdiagnosed. Perhaps most alarmingly, biased reviews can reinforce systemic inequalities – for instance, research shows women’s performance feedback often contains more vague or critical notes on personality, which can stall advancement [16].

Rating inconsistencies between managers – even without overt bias – are another fairness killer. If each manager has their own interpretation of what a “4 out of 5” means, or what “meets expectations” entails, performance scores become a lottery of who your boss is. This is why experts emphasize the need to standardize and calibrate the review process across the organization. We will discuss how to do that shortly.

| Common Bias | How It Skews Reviews | Mitigation Strategy |
|---|---|---|
| Recency Bias | Focus on most recent performance, forgetting earlier achievements or issues. | Track feedback year-round; use notes or quarterly check-ins so the full year is considered. |
| Halo/Horns Effect | One strong or weak aspect influences all other ratings. | Use structured criteria, rate each competency separately; require evidence for each rating. |
| Leniency/Severity | Manager’s personal rating style is too easy or too harsh, distorting scores. | Calibration sessions among managers to normalize standards; clear rating definitions to guide scoring. |
| Central Tendency | Avoiding extreme ratings – everyone ends up “average”. | Allow use of full rating scale; train on rating differentiation; calibrate to ensure high/low performers are recognized. |
| Affinity Bias | Higher ratings to those similar or personally likable to the rater (and the opposite for “outsiders”). | Anonymize elements of review where possible; include multiple raters (360° feedback) to balance perspectives. |
| Spillover Bias | Past performance (good or bad) unduly influences current review. | Focus on current period only; use data from this review period; conduct separate retrospectives of improvements. |

By being aware of these biases, we can design appraisal systems to counteract them. The goal is an objective, consistent evaluation process – one that gives every employee a fair shot, regardless of who their manager is, and that bases assessments on actual performance rather than perception or memory quirks. In the sections below, we’ll outline key practices to achieve this fairness, and show how a modern tool like SuiteVal can help facilitate each one.

Clear, Structured Criteria to Reduce Subjectivity

Ambiguity is the enemy of fairness. When performance evaluation criteria are vague or left to individual interpretation, bias finds fertile ground. Many traditional review forms ask broad questions (“Evaluate the employee’s overall performance”) or use undefined rating labels (“exceeds expectations”) – forcing managers to rely on their personal judgment and memory [17] [18]. It’s no wonder different managers develop different standards. The solution is to make your performance criteria and rubrics as clear, specific, and structured as possible:

- Define Specific Competencies and Expectations: Break down “performance” into the key competencies or goals for the role, and define what success looks like for each. For example, instead of a generic trait like “Leadership,” define sub-components (e.g. “Coaches team members to improve their skills” or “Drives project X to completion on time”). For each competency, provide a description of expectations. When employees know exactly what they’re being evaluated on, and managers have a shared understanding of each category, subjectivity drops. Research emphasizes that a well-defined rubric is one of the best ways to drive objectivity and transparency in appraisals [19]. Managers and employees can then apply evidence to assess whether expectations were met [20].

- Use Observable, Objective Measures Where Possible: Whenever you can, anchor evaluations in objective outcomes or evidence. Numbers and specific results are harder to bias. For instance, include metrics like sales figures, project delivery timelines, error rates, customer satisfaction scores, goal completion percentages, etc., as part of the review data [21]. If a role is hard to quantify, at least record specific examples of work (e.g. “Implemented feature Y that improved site load time by 20%”). This pushes the discussion toward facts and achievements rather than personality impressions. It also helps in making the review forward-looking: if someone didn’t meet a goal, the conversation can be about how to improve those metrics, rather than vague criticisms.

- Standardize Rating Scales with Descriptors: If you use a numeric or labeled rating scale (say 1–5, or “Needs Improvement” to “Excellent”), provide clear definitions for each level on each criterion. One manager’s “4/5” might be another’s “3/5” absent a common frame of reference [22]. For example, what does “Exceeds Expectations” concretely mean for the competency “Communication”? Perhaps: “Consistently communicates complex ideas clearly and proactively shares updates without prompting.” The more you can describe what each rating level looks like in terms of behavior or results, the more aligned managers will be in applying them. Also avoid overly ambiguous labels – even terms like “meets expectations” can be clarified by linking to specific targets or behaviors. In short, make sure everyone is using the same yardstick.

- Structured Forms (No “Open Box” Fields Without Guidance): Free-form comment sections can be valuable for qualitative feedback, but without any guidance they can lead to divergent approaches. Studies warn that when managers are given only broad prompts and an empty box, their implicit biases are more likely to influence what they write [23] [24]. To counteract this, structure your forms with specific questions or prompts. For example, instead of a single open “General Comments” field, break it into: “Key accomplishments this period?”, “Areas for improvement with examples?”, etc. You can even include bias interrupter prompts on the form itself (as suggested by bias experts [25]), such as: “Did you consider the employee’s performance over the entire review period?” and “Can you cite at least two specific examples to support your assessment?” [26]. These reminders nudge managers to stay objective and comprehensive.

- Consistency Across Organization: Use the same evaluation template or framework for all managers (with allowances for role-specific criteria). This ensures every employee’s performance is being judged in a comparable way. Having a unified form also makes it easier to spot discrepancies and to discuss calibrations (more on that next). It’s no coincidence that leading companies that revamped performance management put a lot of effort into clearly communicating criteria and aligning everyone on how to assess those criteria [27].

Structuring your evaluation criteria and process in this way directly combats biases. For example, clearly defined criteria combat the halo/horns effect by forcing managers to evaluate each aspect separately – a halo in one area can’t automatically blanket the others. Requiring evidence and full-period consideration combats recency bias and spillover bias, because managers must look at notes and data from the whole cycle [28]. Standardized scales combat leniency/severity differences, since managers have agreed-upon definitions and can be held accountable to them.

Employees benefit from this clarity too: they know what is expected and see a fair basis for their rating. There’s greater transparency, which enhances their perception of fairness. No more feeling like reviews are random or driven by the manager’s mood; instead, reviews are clearly tied to documented goals and behaviors.
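To make the idea of a structured rubric concrete, here is a minimal Python sketch of a rating form that ties every score to a defined level and to supporting evidence. It is an illustration under stated assumptions, not a description of how SuiteVal or any other tool works: the names `Criterion`, `Rating`, and `validate_review`, the three-level scale, and the wording of levels 1 and 2 are all hypothetical. The two-example minimum mirrors the bias interrupter prompt mentioned above.

```python
from dataclasses import dataclass, field


@dataclass
class Criterion:
    """One competency with a behavioral descriptor for each rating level."""
    name: str
    descriptors: dict[int, str]  # rating level -> what that level looks like


@dataclass
class Rating:
    """A manager's score on one criterion plus the evidence behind it."""
    criterion: str
    level: int
    evidence: list[str] = field(default_factory=list)


def validate_review(rubric, ratings, min_evidence=2):
    """Return a list of problems; an empty list means the review is complete."""
    problems = []
    by_name = {r.criterion: r for r in ratings}
    for criterion in rubric:
        rating = by_name.get(criterion.name)
        if rating is None:
            problems.append(f"{criterion.name}: no rating given")
            continue
        if rating.level not in criterion.descriptors:
            problems.append(
                f"{criterion.name}: level {rating.level} is not defined in the rubric"
            )
        if len(rating.evidence) < min_evidence:
            problems.append(
                f"{criterion.name}: needs at least {min_evidence} specific examples"
            )
    return problems


# Hypothetical rubric; only the level-3 wording comes from the example above.
communication = Criterion(
    name="Communication",
    descriptors={
        1: "Updates are frequently missing or unclear.",
        2: "Communicates clearly when asked for updates.",
        3: "Consistently communicates complex ideas clearly and "
           "proactively shares updates without prompting.",
    },
)

draft = [Rating("Communication", 3, ["Led the Q2 release briefing"])]
issues = validate_review([communication], draft)
print(issues)
```

In this run `issues` holds a single entry, because the draft cites only one example for the Communication rating. A form built this way cannot be submitted with an undefined level or an unsupported score, which is the point of anchoring ratings to descriptors and evidence.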

Calibration Sessions: Aligning Standards Across Managers

Even with structured criteria, humans can still diverge in how they apply them. Calibration sessions are a proven way to ensure that all managers share a common standard for ratings. In a calibration meeting, managers come together (often facilitated by HR) to review the distribution and reasoning of their performance ratings and adjust where necessary for consistency. The idea is to catch and correct any outlier tendencies – whether someone’s being too lenient, too harsh, or possibly influenced by bias – through group deliberation.

How Calibration Works: Typically, after managers draft their performance ratings, but before they are finalized, groups of managers (e.g., all within one department, and/or at the same level) meet to compare notes. They might look at all employees rated as “Exceeds Expectations” in the division and ensure that those employees indeed show exemplary traits at a similar level. If Manager A rated 5 of their 7 team members as “exceeding,” while Manager B rated only 1 of 7 that highly, the group will discuss why. Perhaps Manager A is over-rating or Manager B is under-rating, or their teams truly performed differently – but the discussion sheds light on those differences. Managers present the rationale for their decisions, often citing examples or data. Through discussion, some ratings might be normalized – e.g., Manager A might realize they’ve been too generous and downgrade a couple of those 5 ratings to better align with the common standard, or others might agree to adjust up if someone was undersold.
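The comparison described above can be prepared before the meeting with a few lines of code. The following Python sketch is one possible way to do it, not a prescribed method: the function names, the 0.25 tolerance, and the extra Manager C are hypothetical choices made for illustration. It computes each manager's share of top ratings and lists the managers whose share differs noticeably from the overall share.

```python
from collections import defaultdict


def top_rating_share(ratings, top_label="Exceeds Expectations"):
    """Map each manager to the fraction of their team given the top label.

    `ratings` is an iterable of (manager, employee, label) tuples.
    """
    totals = defaultdict(int)
    tops = defaultdict(int)
    for manager, _employee, label in ratings:
        totals[manager] += 1
        if label == top_label:
            tops[manager] += 1
    return {m: tops[m] / totals[m] for m in totals}


def flag_for_discussion(shares, overall, tolerance=0.25):
    """Return managers whose top-rating share differs from the overall
    share by more than `tolerance` (an arbitrary, illustrative cutoff)."""
    return sorted(m for m, s in shares.items() if abs(s - overall) > tolerance)


def team(manager, n_top, n_total):
    """Build hypothetical rating rows for one manager's team."""
    rows = [(manager, f"{manager}{i}", "Exceeds Expectations") for i in range(n_top)]
    rows += [(manager, f"{manager}{i}", "Meets Expectations") for i in range(n_top, n_total)]
    return rows


# Managers A and B follow the example in the text; Manager C is added for contrast.
ratings = team("A", 5, 7) + team("B", 1, 7) + team("C", 3, 7)

shares = top_rating_share(ratings)
overall = sum(1 for _, _, label in ratings if label == "Exceeds Expectations") / len(ratings)
flagged = flag_for_discussion(shares, overall)
print(flagged)
```

With this data, Managers A and B are both listed, while Manager C matches the overall share. The list is only a prompt for conversation: as noted above, the teams may truly have performed differently, so a flagged manager should be asked for evidence rather than adjusted automatically.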

Benefits of Calibration:

- Greater Consistency: Calibration directly addresses the inconsistency problem. It’s essentially a moderated process of inter-rater alignment. If one manager’s definition of “excellent” was looser than others, this will become apparent and can be corrected. The result is that a rating (or a performance category) means roughly the same thing regardless of which manager gave it [29]. Employees perceive this as fair: knowing that there was a higher-level review for consistency assures them that it wasn’t all at the whim of one boss.

- Surfacing Bias and Outliers: The group setting can expose potential biases. For example, if during calibration it’s noticed that all of one manager’s top ratings went to employees of a certain group, or that another manager consistently scored one demographic lower, those patterns can be flagged (tactfully) and questioned. Peers can call each other out (again, diplomatically) on possible unfairness. One HR researcher noted that without calibration, managers might “interpret the same performance very differently,” and bias goes unchecked – whereas with a calibration committee, there’s an added layer of accountability [30]. It’s harder for bias to hide in a room of 10 people than in a one-on-one review.

- Fair Pay and Promotion Decisions: Calibration is especially vital if performance ratings tie into compensation, bonuses, or promotions. It ensures that those high-stakes decisions aren’t based on apples-to-oranges ratings. For instance, if two employees in different departments did an equally good job but one would have gotten a lower rating due to a stricter boss, calibration fixes that before it affects their merit raise. This helps maintain internal equity. In essence, calibration bolsters the procedural fairness of talent decisions.

- Shared Learning and Standards: Managers often learn from each other in calibration. They discuss what excellence looked like in different contexts, which can refine everyone’s understanding of the company’s performance standards. Over time, a culture develops where managers have a more unified vision of performance expectations.

It’s important to note that calibration must itself be structured to be effective. If done poorly, there’s a risk that a dominant personality in the room could sway everyone (introducing bias in another form), or that it becomes an exercise in forced ranking without context. To avoid this, establish ground rules: for example, require that any rating challenged in calibration must be discussed with evidence (not gossip or gut feel), ensure every manager gets a voice (so one viewpoint doesn’t dominate), and even consider having a neutral HR facilitator or a designated “bias watcher” in the room [31] [32]. Some organizations have each manager briefly present each of their team members: “Here’s the person, here’s what they achieved, here’s why I rated them as I did” – which both educates others and holds the manager accountable for justification.

Also, keep calibration focused on consistency and fairness, not politics. It’s not about managers defending their people at all costs; it’s about collectively ensuring that if two employees from different parts of the company have similar performance, they get similar recognition.

360° Feedback: Broadening Perspectives & Balancing Bias

Another powerful technique to ensure fairness is to widen the input beyond just the direct manager’s view. Enter 360-degree feedback. This refers to incorporating feedback from an employee’s peers, direct reports (for managers), and sometimes internal customers or other colleagues – essentially anyone who works regularly with the person – in addition to the manager’s evaluation. Typically, 360 feedback is collected anonymously via surveys or questionnaires, and then synthesized for use in the review.

Why 360° Feedback Promotes Fairness:

- Mitigates Single-Rater Bias: With only one evaluator, an idiosyncratic bias (like a personality clash or favoritism) can seriously skew a review. But if you have, say, 5-10 other people also providing input, any one individual’s bias gets diluted in the mix [33]. Maybe a manager unfairly downplays an employee’s contributions – but their peers might rate that employee highly on teamwork and impact, balancing the picture. Or vice versa: if a manager has a “halo” view and overlooks an employee’s flaws, peer feedback might bring up issues the manager missed. By averaging multiple viewpoints, the overall assessment is more objective and reliable [34].

- More Complete Performance Picture: Different colleagues see the employee from different angles. A manager sees output and results, peers see collaboration and day-to-day behavior, subordinates see leadership style, etc. Combining these gives a holistic view that a single person can’t fully grasp. For example, an employee might quietly be the go-to helper for teammates (which peers notice and value), even if their manager only sees their formal project outputs. If only the manager’s perspective counts, that important aspect could be missed. 360 feedback ensures good performance in any arena is recognized, and likewise consistent negative behaviors (like poor communication) will be called out by multiple sources if truly an issue.

- Reduces Recency and Selective-Memory Bias: An individual rater might have a recency bias or only remember certain projects. But peers collectively will remember a wider range of interactions across the year. Their aggregated feedback tends to cover the whole period better. Also, peers often give very specific examples in their comments (since they recall specific incidents working with the person). All this context helps counter one person’s patchy memory or skewed focus.

- Anonymity Encourages Honesty: Because 360 input is usually anonymized, colleagues can be frank without fear of repercussion. This often surfaces truths that a direct report wouldn’t tell their boss face-to-face. It can unveil strengths (“John always stepped up to help me meet deadlines”) and developmental areas (“Several team members noted John could communicate updates more proactively”) that the manager may not have directly observed. It’s essentially crowdsourcing the feedback. One caveat: ensure enough respondents and anonymity so that feedback is truly candid. If a team is very small, you might aggregate at a higher level or use only parts of 360 to maintain confidentiality.

- Employee Perception of Fairness: Employees generally perceive a process that includes multiple voices as fairer than one based solely on their manager’s opinion. They know any one person can have blind spots or personal biases, so it’s reassuring that others had input. It also signals that the company values input from all directions (which is a hallmark of an open culture). And when employees get to see their 360 results, they often find it more credible and balanced (“okay, this isn’t just my boss picking on me; this is common feedback from my peers as well, so I should work on it”). Or if it’s praise, they know it’s widely recognized, not just one person’s favoritism.

Implementing 360-degree feedback effectively does require planning. You’ll need a mechanism (often a software tool) to collect feedback confidentially and aggregate it. Typically, HR coordinates the process: selecting who will provide feedback for each employee (often the employee and manager jointly decide on a list of reviewers to invite), sending out surveys, and compiling the results. Modern tools like SuiteVal simplify this: you can trigger 360 feedback rounds as part of the review cycle, and the software will automatically send questionnaires and compile the responses.
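As a rough sketch of what "collect confidentially and aggregate" can mean in practice, the Python example below averages anonymous scores per competency and withholds any competency with too few respondents. It is a simplified illustration, not how SuiteVal or any specific product works: the function name `aggregate_360`, the 1-5 scale, and the minimum of three respondents are assumptions.

```python
from statistics import mean


def aggregate_360(responses, min_respondents=3):
    """Average anonymous scores per competency.

    `responses` is a list of dicts, one per respondent, mapping a competency
    name to a numeric score. Rater identities are never passed in.
    A competency with fewer than `min_respondents` scores is reported as
    None so that no individual's answer can be inferred from the summary.
    """
    scores = {}
    for response in responses:
        for competency, score in response.items():
            scores.setdefault(competency, []).append(score)
    return {
        competency: round(mean(values), 2) if len(values) >= min_respondents else None
        for competency, values in scores.items()
    }


# Hypothetical peer responses on a 1-5 scale.
peer_responses = [
    {"Teamwork": 5, "Communication": 3},
    {"Teamwork": 4, "Communication": 3, "Leadership": 4},
    {"Teamwork": 5, "Communication": 4},
    {"Teamwork": 4},
]
summary = aggregate_360(peer_responses)
print(summary)
```

Here "Leadership" is suppressed because only one person answered it, which reflects the caveat about small teams: when there are too few respondents, reporting the score would effectively identify the rater.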

It’s wise to train employees and managers on how to handle 360 feedback – both giving and receiving it. Set expectations that the purpose is development and fairness, not gossip. Teach feedback givers to be constructive and objective. Teach managers how to integrate 360 feedback into their evaluation (e.g., how to weigh peer feedback against their own observations). Also, ensure psychological safety: reaffirm that no retaliation or negative consequences will come from honest feedback given in good faith.

Continuous Feedback and Year-Round Data: Fighting Recency Bias

One of the simplest yet most effective fairness strategies is ensuring that performance reviews cover the entire evaluation period, not just the tail end of it. We identified recency bias earlier – it’s rife in annual reviews. The manager sits down to write the review and what’s top of mind? Whatever happened lately. To counter this, companies are shifting toward a continuous performance management approach: feedback and data collection happen throughout the year, so that at review time, both manager and employee have a rich record of what happened across the months.

Here’s how you can put this into practice:

- Encourage (or Require) Year-Round Notes: Managers (and employees themselves) should document performance highlights, challenges, and feedback as they occur. This can be as simple as a “performance journal” feature in your HR system, or periodic check-in forms each quarter. When something noteworthy happens – e.g., “Handled upset client on March 5 with great tact, turned situation around” – it gets logged. Later, during the formal review, both parties can look back at these notes. This practice directly counters faulty memory and recency bias [35]. It also helps with “spillover bias,” because improvements over time are visible in the record, enabling the manager to update their view of the employee. Some organizations set up systems where employees can also input their accomplishments or feedback received, which then can be referenced (this empowers employees to make sure their successes aren’t forgotten).

- Frequent Check-ins and Mini-Reviews: Rather than a single annual review, many companies now do quarterly or monthly one-on-one check-ins focused on performance and goals. According to HR industry research, 41% of organizations have shifted toward more frequent one-on-one meetings instead of purely annual reviews [36] [37]. These meetings should be low-key (not scoring events), but they provide timely feedback and course correction. The benefit for fairness is twofold: (1) The manager gets in the habit of reviewing performance regularly, making them more familiar with the employee’s work over time (so they’re less likely to forget things). (2) By the time the formal review happens, there should be no big surprises – issues and wins have been discussed along the way, leading to a more accurate final evaluation. Continuous conversations also mean if an employee had a rough patch and then improved, the improvement will have been noted and acknowledged, not overshadowed by the rough patch alone.

- Use the System’s Data and Analytics: Modern performance management tools record various data points: goal completion status, competency ratings from mid-year, peer feedback, etc. When review time comes, leverage these data. For instance, if your tool tracks goals, the manager can pull up a report of the employee’s goal progress over the year and clearly see that, say, 8 out of 10 goals were met by September. This objective data acts as a check on subjective impressions. Analytics can also highlight patterns like: does the manager tend to rate everyone lower in the annual review than they did in mid-year? If so, why? Some advanced systems (powered by AI) even flag possible recency bias – e.g., if a person’s early-year high performance isn’t reflected in the final rating, the system might prompt the manager with a reminder or a discrepancy report [38]. In fact, companies that adopt continuous, data-driven performance management report very positive outcomes: in one study, 91% of companies that moved to continuous feedback said they now have better data for decision-making and have reduced bias and subjectivity in promotions [39].

- Emphasize Individual Progress, Not Just Peer Ranking: Research also suggests that employees find it fairer when evaluations consider their progress over time (how much they’ve improved or learned) rather than just a static comparison to others [40] [41]. In other words, if you frame reviews as “here’s how you grew since last time” (a temporal comparison) rather than “here’s where you rank among peers” (a social comparison), employees perceive it as more individualized and just [42]. Regular check-ins make it easier to adopt that mindset of tracking individual progress. So when you deliver the final review, you can say, “Look at how far you’ve come on X skill since January – that improvement is factored into your evaluation.”

- Prevent Surprises and Anxiety: From an employee relations perspective, continuous feedback greatly reduces the anxiety and sense of unfairness around reviews. If feedback is only given annually, the high stakes and pent-up unknowns make people nervous, and managers may drop bombshell criticisms that feel unfair because they were never mentioned before. But if feedback is continuous, the final review is more of a culmination of ongoing dialogue. This transparency makes the process feel more fair and collaborative. In fact, companies like Adobe, Dell, Deloitte, and others famously scrapped the annual review in favor of frequent check-ins, and they saw improvements in engagement and reductions in voluntary turnover as a result [43] [44]. Deloitte reported that moving to a continuous model led to better-quality conversations and data, and managers could spend more time coaching rather than filling out forms [45].

To implement this, ensure your performance management approach (and tools) support easy logging of feedback and notes. For example, SuiteVal offers a “Check-In” feature where managers can record brief notes about an employee’s progress at any time, and also schedule regular light-weight review meetings. All those notes automatically roll up into the employee’s profile, so at review time, it’s trivial to look back. SuiteVal can even provide a summary of the year’s feedback for the manager to review while completing the evaluation, ensuring nothing is overlooked.
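The kind of recency check mentioned earlier in this section can be approximated with very simple arithmetic once check-ins are logged with dates and scores. The Python sketch below is a hypothetical heuristic, not a description of any vendor's algorithm: the function name `recency_warning`, the 60-day window, the 0.5-point gap, and the sample data are all assumptions chosen for illustration.

```python
from datetime import date, timedelta
from statistics import mean


def recency_warning(checkins, final_rating, period_end, recent_days=60, gap=0.5):
    """Return a reminder string if the final rating looks recency-weighted.

    `checkins` is a list of (date, score) pairs logged during the period.
    The rating is flagged when it is closer to the average of the last
    `recent_days` than to the full-period average, and differs from the
    full-period average by at least `gap` points.
    """
    if not checkins:
        return None
    full_avg = mean(score for _, score in checkins)
    cutoff = period_end - timedelta(days=recent_days)
    recent = [score for day, score in checkins if day > cutoff]
    if not recent:
        return None
    recent_avg = mean(recent)
    tracks_recent = abs(final_rating - recent_avg) < abs(final_rating - full_avg)
    far_from_full = abs(final_rating - full_avg) >= gap
    if tracks_recent and far_from_full:
        return (
            f"Final rating {final_rating} is closer to the last {recent_days} days "
            f"(avg {recent_avg:.1f}) than to the full period (avg {full_avg:.1f})."
        )
    return None


# Hypothetical check-in log: a strong year followed by one weak December entry.
checkins = [
    (date(2024, 2, 4), 4),
    (date(2024, 4, 4), 4),
    (date(2024, 6, 5), 5),
    (date(2024, 9, 4), 4),
    (date(2024, 12, 2), 2),
]
warning = recency_warning(checkins, final_rating=2, period_end=date(2024, 12, 31))
print(warning)
```

A final rating of 2 triggers the reminder here, while a final rating of 4 would not. The output is meant as a nudge to reread the year's notes; a low final rating can still be justified if the manager has evidence for it.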

Training and Awareness: Equipping Managers to Avoid Bias

No tool or process alone can guarantee fairness if the people executing it aren’t on board. Training managers to recognize and overcome their biases – and to use the tools and data available to them – is a critical piece of the puzzle. After all, managers are the ones actually writing the reviews and having those conversations. Increasing their skill and awareness can dramatically improve the fairness and quality of evaluations across the board.

Key elements of an effective training and awareness program include:

- Bias Awareness Education: It starts with helping managers understand what biases are and that everyone has them. Many managers believe they are objective and fair (and they intend to be), so it can be eye-opening to learn about common rater biases (like those we discussed earlier) and see examples of how they manifest. Share research, like the finding that 62% of rating variance is due to who the rater is, not the employee [46]. A statistic like this often gets managers’ attention: “wow, bias could be creeping in if I’m not careful.” Harvard Business Review notes that ambiguous performance criteria allow implicit biases to creep in [47] – use such insights to stress why following structured criteria is important. The goal is not to accuse anyone of being biased, but to make them curious and vigilant: “Hmm, could I be falling prey to recency or affinity bias here? Let me double-check myself.” Good bias training often includes self-reflection exercises that reveal one’s own assumptions or hesitations (sometimes called “a-ha” activities that make biases visible in a non-accusatory way) [48].

- Skill Building in Objective Evaluation: Beyond awareness, managers need concrete techniques for fairness. Training should cover how to evaluate performance using evidence and data. For example, teach managers to cite specific examples in reviews to back up ratings, and to stick to behaviors and results rather than personal traits. If your appraisal form or SuiteVal tool requires them to enter notes or examples for each rating, reinforce that this isn’t just bureaucracy – it’s to ensure fairness by grounding the review in evidence. Also, train on goal setting and feedback: performance starts with clear expectations, so managers should collaboratively set goals and KPIs with their team at the start of the cycle (and document them), which then serve as an objective basis for review. When managers learn to “say it with data,” their reviews naturally become fairer.

- Using the Full Toolkit: Ensure managers are comfortable with the tools and processes you’ve put in place. Do they know how to use the performance system to record notes continuously? Have they been taught how to incorporate 360 feedback into their appraisal (e.g., how to reconcile peer feedback that differs from their own view)? Do they know the calibration process and trust it? Sometimes unfairness happens simply because a manager didn’t use the resources available – e.g., they didn’t take any notes all year and then had to rely on memory. Emphasize and train the behaviors you want: “We expect you to have at least X check-ins with each employee and log brief notes – here’s how to do that.” Consider incentivizing or at least recognizing managers who are diligent in these practices.

- Unconscious Bias Mitigation Strategies: Provide managers with tips to check themselves during evaluations. For instance, the SPACE2 model from inclusion experts suggests tactics like Slowing down, Perspective-taking (imagine how others would view this person’s work), Asking oneself about potential biases (“Would I give this same feedback if this person were of a different gender/race?”), and so on [49] [50]. Even simple prompts like those we mentioned (“Did I consider the whole year? Have I been consistent with the criteria?”) can be taught as a mental (or written) checklist each manager should go through. Another important concept is the contrast effect: if a manager has just reviewed a superstar, do they then unfairly toughen up on the next, average employee by contrast? Training can raise awareness of such sequencing effects and suggest countermeasures, such as shuffling the review order or returning to the criteria afresh for each employee.

- Accountability and Calibration in Training: Let managers know that the reviews they submit will be checked (and calibrated) for consistency and fairness. This isn’t to scare them, but to underscore that fairness is a company priority. When managers know that any outlier ratings will need to be explained in calibration, they’ll likely think twice and ensure they have justification. Some companies even share analytics with managers after the cycle, like “Your rating distribution vs the company average” – which can prompt self-reflection if they were an outlier. Incorporate these expectations into manager training: e.g., teach them how to present an employee’s case during calibration, which inherently teaches what kind of evidence or rationale is expected.

- Emphasize Coaching Over Judging: A mindset shift helps fairness too. Encourage managers to approach performance management as coaches rather than judges. A coach’s job is to help the employee improve continuously, which means giving feedback fairly and frequently, not sitting in judgment at year-end. When managers internalize that their role is to develop talent, they are more likely to want a fair and accurate appraisal (because you can’t coach well if your feedback is off-base or biased). This also means managers should be trained to discuss reviews in a way that feels fair and constructive to the employee – for instance, focusing on future growth (“What can we do to help you succeed?”) rather than dwelling on personal critiques. Managers with strong coaching skills naturally conduct fairer reviews [51] because they engage employees in the process and avoid one-way, possibly biased pronouncements.

Finally, consider making bias and fairness training an ongoing effort, not a one-time workshop. For example, send out a quick “fairness tip” email series during review season (reminding about common biases and checklists). Update training with new insights (maybe from post-review surveys or new research). Keep the dialogue open: encourage managers to discuss challenges they face in being fair, perhaps in manager forums or with HR, so they can learn from each other. A culture of continuous learning in this area will sustain the improvements.
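The “your rating distribution vs the company average” report mentioned above can be prototyped in a few lines. What follows is a minimal sketch, not a description of how SuiteVal or any other product computes it: the sample data, the 1–5 scale, the `manager_vs_company` function name, and the 0.75-point flag threshold are all assumptions chosen for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical sample data: (manager, rating on an assumed 1-5 scale).
ratings = [
    ("alice", 5), ("alice", 5), ("alice", 4), ("alice", 5),
    ("bob", 3), ("bob", 3), ("bob", 4), ("bob", 3),
    ("carol", 3), ("carol", 4), ("carol", 4), ("carol", 3),
]


def manager_vs_company(ratings, threshold=0.75):
    """Compare each manager's mean rating with the company-wide mean.

    `threshold` is an assumed cut-off (in rating points) beyond which a
    manager is flagged for a closer look during calibration.
    """
    by_manager = defaultdict(list)
    for manager, score in ratings:
        by_manager[manager].append(score)

    company_mean = mean([score for _, score in ratings])

    report = {}
    for manager, scores in by_manager.items():
        gap = mean(scores) - company_mean
        report[manager] = {
            "mean": round(mean(scores), 2),
            "gap": round(gap, 2),
            # A flag is a prompt for discussion, not proof of bias.
            "flag": abs(gap) > threshold,
        }
    return company_mean, report


company_mean, report = manager_vs_company(ratings)
for manager, row in sorted(report.items()):
    print(manager, row)
```

A flagged manager may simply lead a stronger or weaker team, so a gap like this is best treated as a conversation starter for calibration rather than a verdict.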

Fostering Transparency and Trust in the Process

Wrapping all these elements together is the principle of transparency. A fair process isn’t just fair in how it’s done; it’s also perceived as fair by employees. Building trust requires being open about how performance evaluations are conducted and showing employees that the system truly is merit-based.

Here are some ways to increase transparency and trust:

- Communicate the How and Why: Don’t keep your performance management practices a mystery. Explain to all employees how the review process works, including the steps you’ve taken to ensure fairness. For example, you might inform staff: “This year, we’re including a calibration step where managers meet to ensure consistent standards across teams, and we’re adding an anonymous peer feedback component to incorporate diverse perspectives.” When people understand that these mechanisms (calibration, 360, etc.) are in place, they are more likely to trust the outcome. Also communicate the criteria and rating scale ahead of time (ideally at the start of the year or cycle). Everyone should know what the evaluation criteria are and what the ratings mean. This way, the review isn’t an opaque judgment but a clear comparison against known expectations [52].

- Invite Employee Involvement: Involve employees in their own evaluation process. Many companies now have employees do self-assessments as part of the review. This lets employees voice their accomplishments and perceptions, and when discussed side-by-side with the manager’s review, it can highlight discrepancies to talk through. While self-assessments can have their own biases (some people are too modest, others overestimate), the mere act of including the employee’s voice makes the process feel more two-way and fair. Another approach is to allow employees to suggest who should provide 360 feedback on them, which gives them a sense of agency and trust in the process. And of course, if an employee disagrees with an evaluation, have a clear, professional way to discuss or appeal it – even if the rating ultimately stands, being heard matters for fairness perception.

- Show Your Work (within reason): Managers should be prepared to explain the rationale behind a review. When delivering the performance review to the employee, a manager ought to cite examples and data (“You met 4 of 5 goals, and here’s the progress on the 5th; your customer satisfaction average was 4.2, which is slightly above the company benchmark of 4.0”). Encourage managers to share some of the peer feedback highlights (“Three of your peers noted how you stepped up to help on Project X – great job”). When an employee sees that the rating or feedback is tied to real evidence and multi-source input, it feels legitimate. Contrast that with a generic “You need to improve” with no explanation – the latter breeds frustration and assumptions of bias (“maybe they just don’t like me”). Of course, managers must be tactful and not divulge any confidential peer identities, but they can convey the substance of feedback. If your system allows, showing the employee a summary of their 360 feedback or goal report can be very effective. Some companies even share calibration outcomes in aggregate (like “this year, 15% of employees were rated as exceeds, 70% meets, 15% needs improvement after calibration”), which helps employees see that ratings weren’t random.

- Monitor and Share Progress: Use analytics to monitor whether your fairness initiatives are working. For example, track whether rating distributions across departments are becoming more uniform over time, or whether any demographic group is consistently scoring lower or higher after controlling for other factors. If you identify an issue (say, one department’s scores are still out of line with the rest), address it – and possibly be transparent about the correction (“We noticed inconsistency in X, so we are adjusting Y”). Many modern systems, including SuiteVal, facilitate slicing and dicing performance data to find such patterns [53]. Transparency can extend to leadership: for instance, HR could report to executives, “We achieved 100% manager participation in bias training and calibration this cycle, and employee survey results on perceived review fairness went up 10%.” Over time, when employees see that HR and leadership are actively ensuring fairness (and hearing feedback about the process itself), trust grows.

- Consistency and Follow-Through: Finally, to build trust, you must consistently apply these fair processes. If you do calibration one year and not the next, or offer 360 feedback only to certain departments, you risk perceptions of unfairness in the system itself. Stick with the program, refine it with employee input, and always follow through on what you say you’ll do (e.g., if you promise continuous feedback, managers need to actually do it, or employees will rightly call out the lip service). Fairness in performance evaluation isn’t a one-time project; it’s an ongoing commitment and part of the culture. But the payoff is worth it: employees who believe the system is fair are more engaged and more committed to their goals [54], and they trust their leaders more. As one MIT Sloan study put it, when employees feel procedures and outcomes are fair, their commitment to the organization soars. It’s about creating a positive cycle of trust and performance.
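One simple way to check whether department ratings are converging, as suggested under “Monitor and Share Progress,” is to compare the spread of department averages from one review cycle to the next. The sketch below assumes a 1–5 rating scale and invented sample records; the `department_spread` helper is hypothetical, and the population standard deviation of department means is just one possible yardstick. It does not control for other factors, which a fuller analysis would need to do.

```python
from collections import defaultdict
from statistics import mean, pstdev

# Hypothetical records: (review cycle, department, rating on a 1-5 scale).
records = [
    ("2023", "sales", 4), ("2023", "sales", 5),
    ("2023", "engineering", 3), ("2023", "engineering", 2),
    ("2023", "support", 3), ("2023", "support", 4),
    ("2024", "sales", 4), ("2024", "sales", 4),
    ("2024", "engineering", 3), ("2024", "engineering", 4),
    ("2024", "support", 3), ("2024", "support", 4),
]


def department_spread(records):
    """Return, per cycle, the standard deviation of department mean ratings.

    A smaller value means department averages sit closer together.
    """
    grouped = defaultdict(lambda: defaultdict(list))
    for cycle, dept, rating in records:
        grouped[cycle][dept].append(rating)

    spread = {}
    for cycle, depts in grouped.items():
        dept_means = [mean(scores) for scores in depts.values()]
        spread[cycle] = pstdev(dept_means)
    return spread


spread = department_spread(records)
for cycle in sorted(spread):
    print(cycle, round(spread[cycle], 2))
```

A shrinking spread suggests managers are applying more similar standards, though departments can genuinely differ in performance, so the number is a signal to investigate rather than a target to force.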

Conclusion: Fair Evaluations Build a Better Workplace

Achieving truly fair, consistent, and bias-free evaluations is a challenge – but one worth tackling. By now, a few themes should be clear:

- Structure and Data trump Subjectivity: When you ground your performance reviews in structured criteria, objective data, and multiple viewpoints, you leave much less room for bias. You’re essentially engineering fairness into the process.

- Deliberate Processes (Calibration, 360, Continuous Feedback) Pay Off: These processes require effort and the right tools, but they directly address the common failure points of traditional reviews. They ensure that no single manager’s quirks or memory lapses dictate an employee’s fate. Instead, performance evaluation becomes a more democratic, year-round, and evidence-based practice.

- Education and Culture Matter: Tools and processes alone don’t solve everything. Training managers and fostering a culture that values fairness are indispensable. When managers become coaches and allies in performance improvement, and employees see that, the whole dynamic shifts from adversarial to collaborative.

- Transparency Builds Trust: Employees will trust the performance review system if they can see it’s fair. Over time, as you demonstrate consistency and invite employee involvement, skepticism gives way to buy-in. People start to believe “Yes, this process is actually here to help me, not hurt me.”

The benefits of fair and bias-free evaluations extend beyond just the review process itself. They ripple out to the entire organization: higher employee engagement, better talent retention, more equitable promotions, and a reputation as a meritocratic workplace. In a climate where attracting and keeping talent is tougher than ever, having a trusted performance management system is a competitive advantage. Conversely, if employees smell bias or politics in evaluations, it undermines your diversity and inclusion efforts and sends people running for the exit – as we saw with the striking statistic that 85% of workers would consider quitting after an unfair review [55].

By implementing the strategies discussed – from calibration meetings to bias training to leveraging SuiteVal’s platform for structure and analytics – you are proactively closing the door on bias. You’re saying, “We commit to evaluating our people based on merit, consistently and fairly.” That speaks volumes to your team.

Ready to Elevate Your Performance Reviews?

If you’re an HR leader or decision-maker looking to put these ideas into action, consider how SuiteVal can assist. SuiteVal was built with fairness and consistency at its core. Whether it’s ensuring every manager uses the same criteria, making 360° feedback easy, highlighting potential biases, or facilitating calibration, SuiteVal provides the infrastructure for a world-class, fair review process.

✅ Transform performance appraisals from a source of frustration into a driver of trust and excellence.

Request a Demo

In the end, ensuring fair, bias-free evaluations is not just about avoiding negatives – it’s about creating a positive, empowering environment. When employees know that their hard work will be noticed and judged impartially, they’re more motivated to excel. When managers have confidence in the system, they invest more in developing their people. It’s a win-win that can propel your organization forward. Fair evaluations are the foundation of a performance culture where everyone can thrive – and with the right approach, they are absolutely within reach.

[1] Eliminating bias from performance appraisals – Human Capital Hub

[2] 17 Mind-Blowing Performance Management Statistics | ClearCompany

[3] Performance Management Statistics: What 2025 Holds for HR Leaders

[4] Performance Management Statistics: What 2025 Holds for HR Leaders

[5] Eliminating bias from performance appraisals – Human Capital Hub

[6] What’s Wrong With Performance Reviews? | Psychology Today

[7] 7 Sources of Performance Review Bias and How to Fix Them

[8] 7 Sources of Performance Review Bias and How to Fix Them

[9] 7 Sources of Performance Review Bias and How to Fix Them

[10] 7 Sources of Performance Review Bias and How to Fix Them

[11] 7 Sources of Performance Review Bias and How to Fix Them

[12] 7 Sources of Performance Review Bias and How to Fix Them

[13] Eliminating bias from performance appraisals – Human Capital Hub

[14] 7 Sources of Performance Review Bias and How to Fix Them

[15] 7 Sources of Performance Review Bias and How to Fix Them

[16] Eliminating bias from performance appraisals – Human Capital Hub

[17] Eliminating bias from performance appraisals – Human Capital Hub

[18] Eliminating bias from performance appraisals – Human Capital Hub

[19] Eliminating bias from performance appraisals – Human Capital Hub

[20] Eliminating bias from performance appraisals – Human Capital Hub

[21] Eliminating bias from performance appraisals – Human Capital Hub

[22] Eliminating bias from performance appraisals – Human Capital Hub

[23] Why Most Performance Evaluations Are Biased, and How to Fix Them

[24] Eliminating bias from performance appraisals – Human Capital Hub

[25] Eliminating bias from performance appraisals – Human Capital Hub

[26] Eliminating bias from performance appraisals – Human Capital Hub

[27] Eliminating bias from performance appraisals – Human Capital Hub

[28] Eliminating bias from performance appraisals – Human Capital Hub

[29] 7 Sources of Performance Review Bias and How to Fix Them

[30] Eliminating bias from performance appraisals – Human Capital Hub

[31] Eliminating bias from performance appraisals – Human Capital Hub

[32] Eliminating bias from performance appraisals – Human Capital Hub

[33] 7 Sources of Performance Review Bias and How to Fix Them

[34] 7 Sources of Performance Review Bias and How to Fix Them

[35] Eliminating bias from performance appraisals – Human Capital Hub

[36] Performance Management Statistics: What 2025 Holds for HR Leaders

[37] Performance Management Statistics: What 2025 Holds for HR Leaders

[38] Eliminating bias from performance appraisals – Human Capital Hub

[39] Eliminating bias from performance appraisals – Human Capital Hub

[40] Eliminating bias from performance appraisals – Human Capital Hub

[41] Eliminating bias from performance appraisals – Human Capital Hub

[42] Eliminating bias from performance appraisals – Human Capital Hub

[43] Eliminating bias from performance appraisals – Human Capital Hub

[44] Eliminating bias from performance appraisals – Human Capital Hub

[45] Eliminating bias from performance appraisals – Human Capital Hub

[46] What’s Wrong With Performance Reviews? | Psychology Today

[47] Why Most Performance Evaluations Are Biased, and How to Fix Them

[48] Eliminating bias from performance appraisals – Human Capital Hub

[49] Eliminating bias from performance appraisals – Human Capital Hub

[50] Eliminating bias from performance appraisals – Human Capital Hub

[51] The fairness factor in performance management | McKinsey

[52] Eliminating bias from performance appraisals – Human Capital Hub

[53] Eliminating bias from performance appraisals – Human Capital Hub

[54] The fairness factor in performance management | McKinsey

[55] 17 Mind-Blowing Performance Management Statistics | ClearCompany